Palantir, the data analytics company known for working with intelligence agencies and law enforcement, is in talks with the IRS to help determine who gets audited. If you find that concerning, you're not alone.

According to documents obtained by Wired, the IRS is exploring using Palantir's data integration and analysis platforms to identify potential tax fraud, particularly around clean energy credits. On the surface, that sounds reasonable—use advanced analytics to catch fraudsters and ensure compliance. The problem is what happens when you give a surveillance company access to financial data for hundreds of millions of Americans.

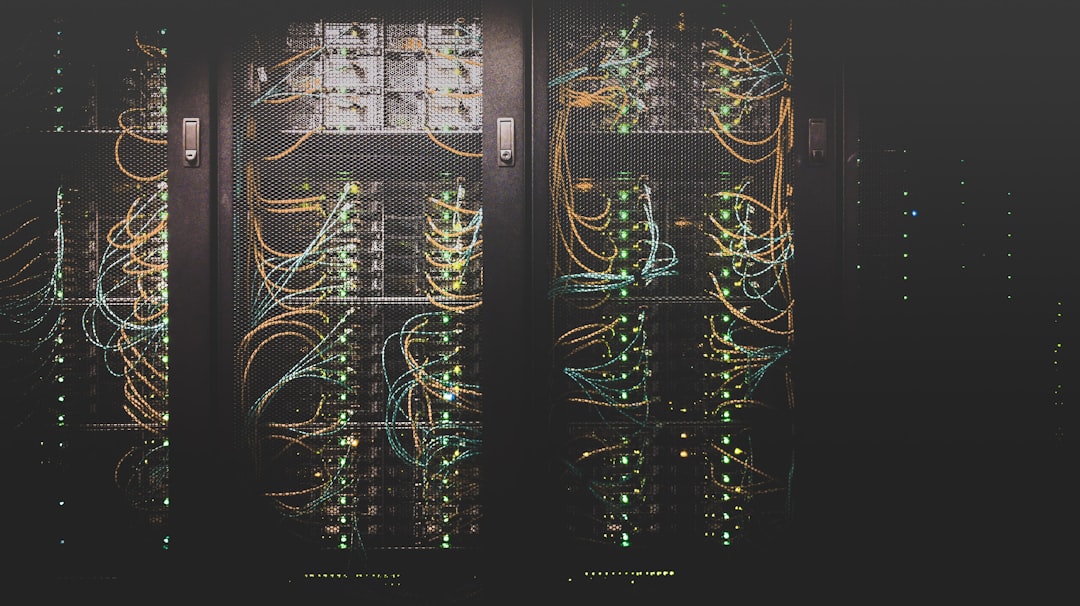

Palantir specializes in connecting disparate data sources and finding patterns humans would miss. Their Gotham platform was originally built for counterterrorism, linking intelligence from multiple agencies to identify threats. Now the IRS wants to point that capability at taxpayers.

The technical capability is impressive. The civil liberties implications are terrifying.

Here's what could go wrong. Start with algorithmic bias. If the system is trained on historical audit data, it will inherit the biases of past enforcement, meaning people of color, small business owners, and lower-income taxpayers could face disproportionate scrutiny. The IRS has already faced criticism for auditing poor Americans at higher rates than wealthy ones because those audits are cheaper and easier.
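To make the bias mechanism concrete, here is a toy sketch. Every number and name in it is invented for illustration; none of it comes from IRS data or from Palantir's software. It assumes two groups with the same true fraud rate, one of which was historically audited five times as often, and a naive model that learns "risk" from the fraud that past audits happened to find.

```python
from dataclasses import dataclass


@dataclass
class GroupHistory:
    returns_filed: int
    true_fraud_rate: float  # identical across groups in this toy setup
    audit_rate: float       # share of returns audited in the past (made up)


def detected_fraud_per_return(group: GroupHistory) -> float:
    """Fraud only shows up in the training data if the return was audited."""
    audited = group.returns_filed * group.audit_rate
    detected = audited * group.true_fraud_rate
    return detected / group.returns_filed


# Hypothetical history: same underlying fraud rate, different audit intensity.
history = {
    "group_a": GroupHistory(returns_filed=10_000, true_fraud_rate=0.02, audit_rate=0.01),
    "group_b": GroupHistory(returns_filed=10_000, true_fraud_rate=0.02, audit_rate=0.05),
}

# A naive model that scores groups by historically detected fraud.
learned_risk = {name: detected_fraud_per_return(g) for name, g in history.items()}
risk_ratio = learned_risk["group_b"] / learned_risk["group_a"]

for name, risk in learned_risk.items():
    print(f"{name}: learned risk {risk:.4f}")
print(f"group_b looks {risk_ratio:.1f}x riskier than group_a")
```

In this setup the second group looks several times riskier purely because it was audited more in the past. A system that then directs more audits toward that group would keep reinforcing the gap.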

Then there's transparency. Palantir's algorithms are proprietary. If their system flags you for audit, you won't know why. You can't appeal to an algorithm. You can't question its reasoning. You just get audited because a black box said so.

The IRS defends the approach by pointing to genuine problems. Billions in clean energy tax credits are being claimed, and some are fraudulent. Using advanced analytics to identify suspicious patterns makes sense. But there's a difference between flagging anomalies for human review and automating enforcement decisions.

Where you draw that line matters enormously. If Palantir's system suggests which returns deserve closer scrutiny, that's one thing. If it effectively determines who gets audited based on algorithmic probability scores, that's something else—and significantly more concerning.
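The distinction can be sketched in a few lines of code. This is a hypothetical illustration, not a description of how the IRS or Palantir would build anything: the threshold, the function names, and the `examiner_decides` callback are all assumptions made for the example.

```python
from enum import Enum
from typing import Callable


class Action(Enum):
    NO_ACTION = "no_action"
    AUDIT = "audit"


def advisory_pipeline(
    score: float,
    examiner_decides: Callable[[float], bool],
    review_threshold: float = 0.8,  # hypothetical cutoff
) -> Action:
    """The score only queues a return for review; a human makes the call."""
    if score < review_threshold:
        return Action.NO_ACTION
    return Action.AUDIT if examiner_decides(score) else Action.NO_ACTION


def automated_pipeline(score: float, audit_threshold: float = 0.8) -> Action:
    """The score alone determines the outcome; no human is in the loop."""
    return Action.AUDIT if score >= audit_threshold else Action.NO_ACTION


# Hypothetical examiner who looks at the flagged return and declines to audit.
def skeptical_examiner(score: float) -> bool:
    return False


print(advisory_pipeline(0.9, skeptical_examiner))  # Action.NO_ACTION
print(automated_pipeline(0.9))                     # Action.AUDIT
```

Same score, same threshold, different outcome. The only thing that changed is whether a person had the authority to say no.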