OpenAI is planning to allow sexting with ChatGPT through a new "Adult Mode." Before anyone gets excited or outraged, let's talk about what this actually means: you'll be sharing your most intimate thoughts and desires with a company that retains conversations by default and is subject to government data requests.

The technical capability makes sense. Large language models can engage in intimate conversations - they're trained on vast amounts of human text, including romantic and sexual content. OpenAI has been restricting this capability, but competitors without such guardrails have shown there's market demand.

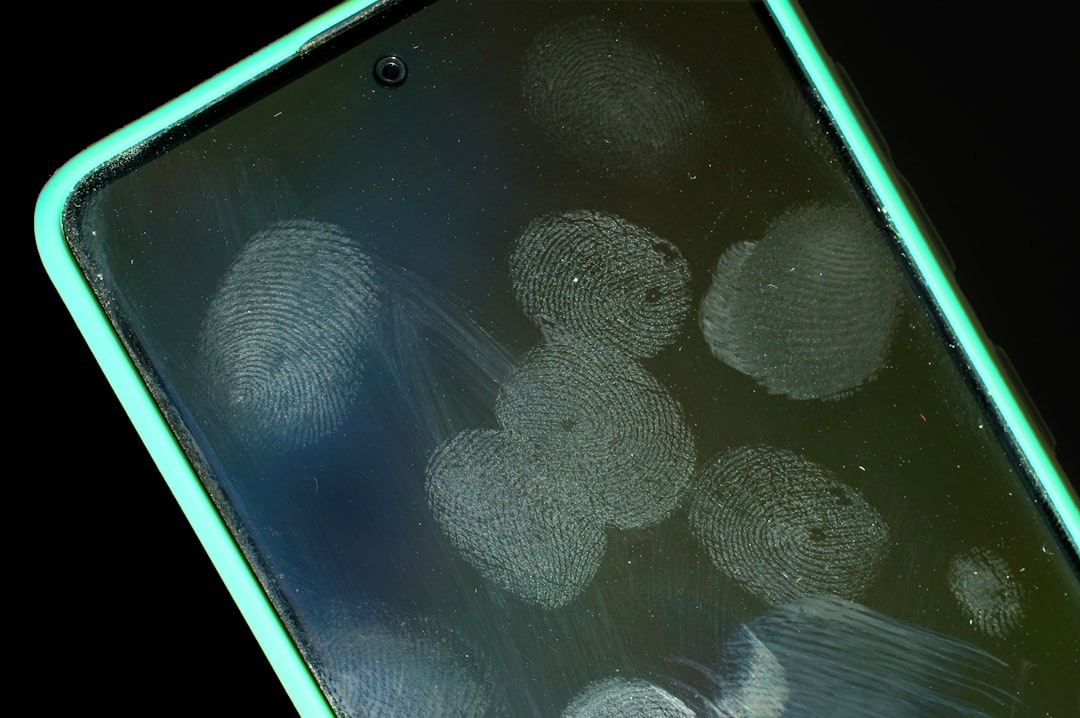

What's concerning isn't the technology - it's the data trail. By default, the messages you send to ChatGPT are logged, analyzed, and stored. That would include whatever intimate conversations you have in Adult Mode. This data is exposed to legal requests, potential breaches, and future policy changes you can't anticipate.

When I was building in fintech, we obsessed over data security because financial information is sensitive. But intimate conversations might be even more sensitive. The difference is that banks have regulatory frameworks governing how they handle your data. AI chat services largely don't.

OpenAI's privacy policy gives them broad latitude to use conversation data to improve their models. That means your intimate chats could become training data. They claim to anonymize it, but researchers have repeatedly shown that "anonymized" data can often be de-anonymized with enough context.
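To make the de-anonymization point concrete, here is a minimal sketch of a linkage attack, the general technique behind many re-identification results. Every record, field name, and function here is invented for illustration; it says nothing about how OpenAI actually stores or anonymizes data. The idea is that even with names and account IDs removed, a handful of contextual details (city, job, age range) can be matched against information available elsewhere.

```python
# Toy linkage attack. All records below are invented for illustration.
from collections import defaultdict

# "Anonymized" chat records: no names or account IDs, but context remains.
anonymized_chats = [
    {"chat_id": "c1", "city": "Austin", "job": "nurse", "age_range": "30-39"},
    {"chat_id": "c2", "city": "Boise", "job": "teacher", "age_range": "40-49"},
    {"chat_id": "c3", "city": "Austin", "job": "teacher", "age_range": "20-29"},
]

# Hypothetical public profile data an attacker might already hold.
public_profiles = [
    {"name": "Person A", "city": "Austin", "job": "nurse", "age_range": "30-39"},
    {"name": "Person B", "city": "Boise", "job": "teacher", "age_range": "40-49"},
    {"name": "Person C", "city": "Boise", "job": "teacher", "age_range": "40-49"},
    {"name": "Person D", "city": "Denver", "job": "chef", "age_range": "20-29"},
]

# Fields that are not identifying alone but can be in combination.
QUASI_IDENTIFIERS = ("city", "job", "age_range")


def reidentify(chats, profiles, keys=QUASI_IDENTIFIERS):
    """Return {chat_id: name} for chats whose quasi-identifiers match exactly one profile."""
    index = defaultdict(list)
    for profile in profiles:
        index[tuple(profile[k] for k in keys)].append(profile["name"])

    matches = {}
    for chat in chats:
        candidates = index.get(tuple(chat[k] for k in keys), [])
        if len(candidates) == 1:  # a unique match means the record is re-identified
            matches[chat["chat_id"]] = candidates[0]
    return matches


if __name__ == "__main__":
    print(reidentify(anonymized_chats, public_profiles))
```

In this toy data, only the chat whose combination of details is unique gets linked to a name. The chat that matches two profiles stays ambiguous, which is why more context in a conversation makes re-identification easier.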

There's also the question of what happens during a security breach. OpenAI may have good security, but no company is immune to being hacked. If that happens, do you want your intimate AI conversations leaked? For any service holding this much sensitive data, it's safer to treat a breach as a matter of when, not if.

The human-AI interaction experts warning about this aren't being prudish. They're pointing out that intimate AI creates unprecedented privacy risks. When you share intimate thoughts with another person, that's one relationship to manage. When you share them with a company, you're trusting not just the AI, but the entire corporate entity, its employees, its security practices, and its legal obligations.

Some will argue that consenting adults should be free to interact with AI however they want. Fair enough. But consent requires understanding what you're consenting to. Many users may not realize their conversations can be logged, analyzed, and potentially used as training data.

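OpenAI could build Adult Mode with stronger privacy protections - no logging beyond what's needed to respond, no training data usage, and encryption that keeps stored chats unreadable to anyone but the user. (Strict end-to-end encryption is harder here than in a messaging app, because the model has to read your messages to reply.) They probably won't, because that data is valuable and their business model depends on training better models.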

The result is a feature that will inevitably generate intimate surveillance data on a massive scale. Not because OpenAI is malicious, but because collecting data is how the business works.

If you use Adult Mode, understand what you're trading. You're getting an intimate conversational experience. You're giving up intimate conversational privacy. That's not necessarily a bad trade, but it should be an informed one.