Walk through a California mall, a New York neighborhood, or, increasingly, anywhere in America, and you'll spot them: high-tech "scarecrows" that are really sophisticated surveillance robots. They're spreading rapidly, deployed by private security companies with minimal oversight of what they record or who sees the footage.

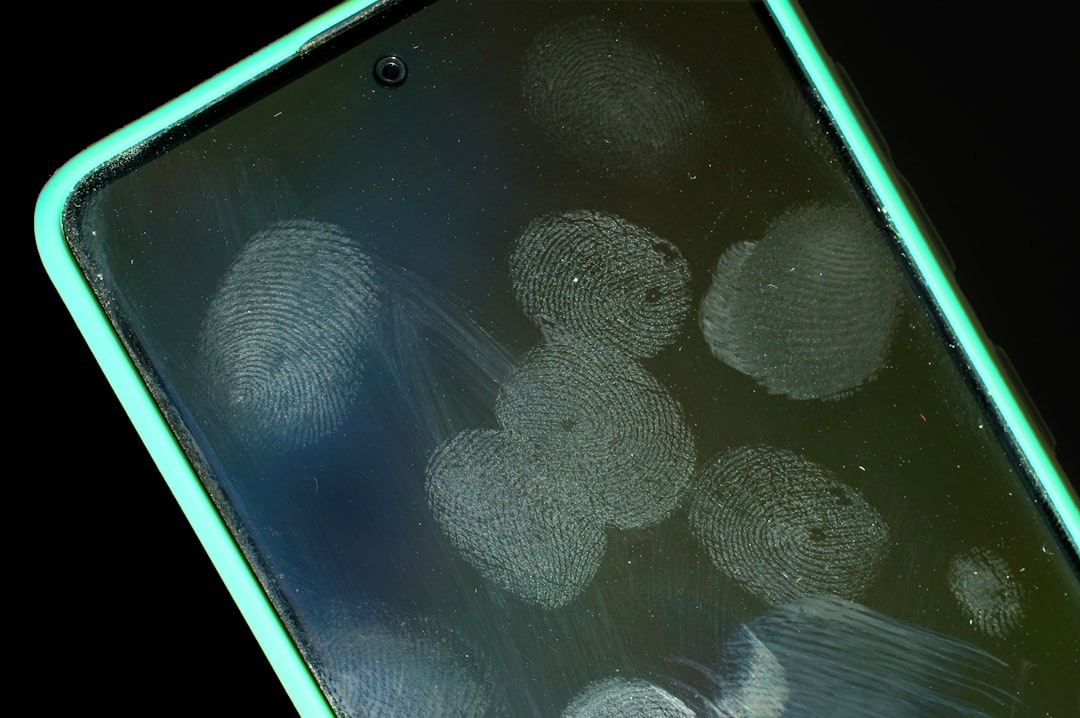

The devices look innocuous - roughly the size and shape of a large trash can, mounted on wheels. They're equipped with cameras, sensors, and, increasingly, AI-powered analysis. Some can recognize faces. Others detect "anomalous behavior." Most are recording continuously.

The deployment pattern is telling. These robots appear first in malls and parking lots - private property where constitutional protections are weaker. Then they migrate to public sidewalks and neighborhoods. Property owners love them because they're cheaper than human security guards. Civil liberties advocates hate them because there's virtually no regulation governing their use.

What makes these devices particularly problematic is the accountability gap. When police deploy surveillance cameras, there are at least nominal oversight mechanisms - public records requests, civilian review boards, constitutional constraints. When private companies deploy robots that look like adorable Wall-E cousins but act like roaming surveillance systems, those protections largely disappear.

The data these devices collect doesn't stay on the robot. It's uploaded to cloud servers operated by security companies you've never heard of, under privacy policies you've never read. Some companies sell or share data with law enforcement. Others retain footage indefinitely. Most won't tell you which category they're in.

From a technical standpoint, the capabilities are advancing faster than policy. Early models just recorded video. Current systems can track individuals across multiple cameras, flag "suspicious" behavior using AI, and alert human operators to perceived threats. The problem: AI-powered threat detection is notoriously biased and error-prone.
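To see why error rates matter so much here, consider a back-of-the-envelope sketch. Every number below is an illustrative assumption, not a measurement from any real robot or vendor: suppose a detector scans 10,000 passersby, 1 in 1,000 of them is a genuine threat, the system catches 95% of real threats, and it wrongly flags 2% of everyone else. The function name `alert_breakdown` and its parameters are made up for this example.

```python
def alert_breakdown(people_scanned, threat_rate, true_positive_rate, false_positive_rate):
    """Estimate alert counts for a hypothetical threat detector.

    All inputs are assumed values chosen for illustration only.
    Returns (true_alerts, false_alerts, precision).
    """
    actual_threats = people_scanned * threat_rate
    non_threats = people_scanned - actual_threats

    # Alerts raised on people who really are threats
    true_alerts = actual_threats * true_positive_rate
    # Alerts raised on people who are not threats
    false_alerts = non_threats * false_positive_rate

    total_alerts = true_alerts + false_alerts
    # Precision: the share of all alerts that point at a real threat
    precision = true_alerts / total_alerts if total_alerts else 0.0
    return true_alerts, false_alerts, precision


true_alerts, false_alerts, precision = alert_breakdown(
    people_scanned=10_000,       # assumed foot traffic
    threat_rate=0.001,           # assumed: 1 in 1,000 is a real threat
    true_positive_rate=0.95,     # assumed detection rate
    false_positive_rate=0.02,    # assumed false-alarm rate
)
print(f"true alerts: {true_alerts:.1f}")
print(f"false alerts: {false_alerts:.1f}")
print(f"share of alerts that are real: {precision:.1%}")
```

Under these assumed numbers, roughly 200 innocent people get flagged for every 10 or so real threats, so the large majority of alerts land on someone who did nothing wrong. A detector that sounds accurate on paper can still generate mostly false alarms when real threats are rare, and any bias in who gets falsely flagged compounds the harm.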

The business model is subscription-based security-as-a-service. Property owners pay monthly fees for robotic patrols. The security company provides the hardware, maintains the AI systems, and stores the data. It's surveillance infrastructure without the capital costs.

California has started pushing back with regulations requiring transparency about where surveillance devices are deployed and what data they collect. But most states have no such requirements. The default: if a private company deploys a device on private property, it's largely unregulated.

Some cities have banned face recognition technology on these devices. That's good, but it doesn't address the fundamental issue - continuous surveillance is problematic regardless of whether faces are being identified. The presence of recording devices changes behavior, creates chilling effects, and enables tracking that wasn't possible with human security guards.

The companies deploying these robots argue they're just providing security. That's partly true. They also happen to be building a distributed surveillance network with minimal accountability, governed by private contracts rather than constitutional protections.

The technology will keep spreading because the economics work. What's unclear is whether policy will catch up before these devices become ubiquitous and the surveillance society they enable becomes normalized.