Thousands of hastily built AI applications are exposing corporate and personal data on the public internet. The practice behind them has a name, 'vibe-coding': building with AI assistance without understanding the underlying security implications. A WIRED investigation found a new class of catastrophic security vulnerabilities born from the democratization of development.

Vibe-coding is exactly what it sounds like. Developers describe their desired functionality to an AI coding assistant, get back working code, and ship it without actually understanding what the code does. The apps work - they just leak everything.

The investigation found databases exposed without authentication, API keys committed to public repositories, customer data searchable on Google, and internal corporate documents accessible via direct URL. These aren't sophisticated attacks - they're the most basic security mistakes imaginable, replicated at scale across thousands of AI-generated applications.
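To make the "API keys committed to public repositories" failure concrete, here is a minimal sketch of the kind of check that would catch it before a commit. It is an illustration, not a tool from the investigation: the function name `find_secrets` and both patterns are assumptions, and real secret scanners use far larger rule sets.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive rule set).
SECRET_PATTERNS = {
    # AWS access key IDs follow a well-known "AKIA" + 16 characters shape.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A credential-like name assigned a quoted literal of 8+ characters.
    "hardcoded_assignment": re.compile(
        r"""\b(api[_-]?key|secret|password|token)\b\s*[:=]\s*["'][^"']{8,}["']""",
        re.IGNORECASE,
    ),
}


def find_secrets(text):
    """Return (line_number, pattern_name) pairs for lines that look like secrets."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

Run against a file's contents before committing, a non-empty result is a reason to stop and move the value into an environment variable or a secrets manager. Pattern matching like this produces false positives and misses plenty, so treat it as a first line of defense rather than a guarantee.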

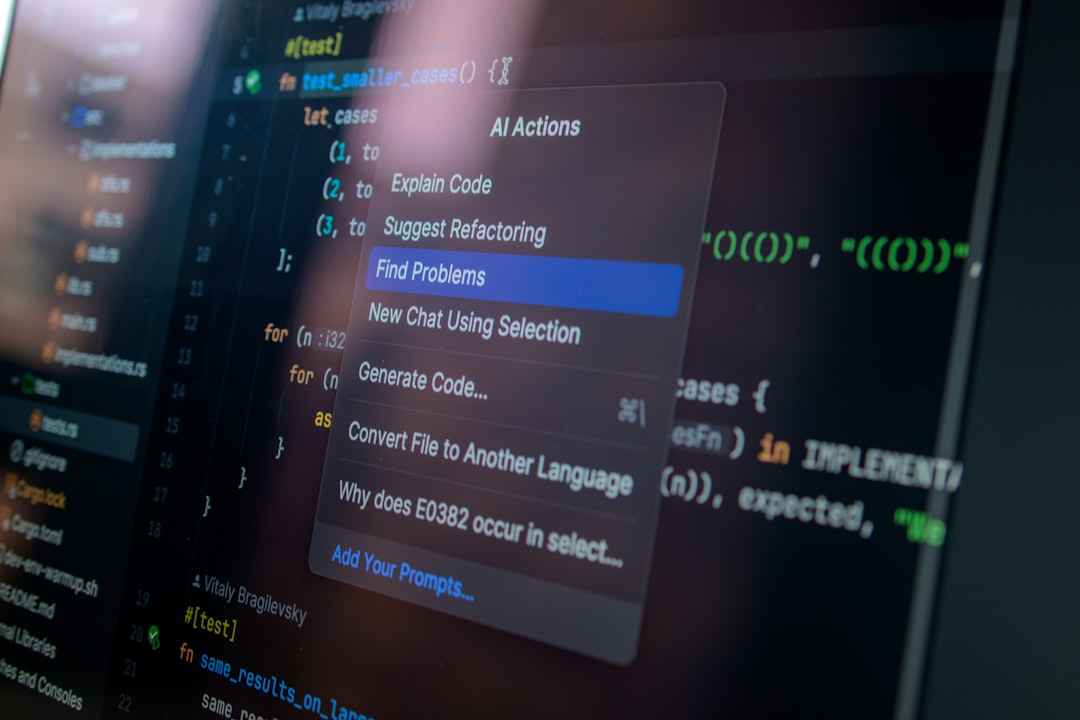

Here's what's happening technically. AI coding assistants are trained on public code repositories, which are full of security anti-patterns and vulnerable examples. When you ask ChatGPT or Copilot to build a user authentication system, it'll give you something that works. But 'works' and 'is secure' are very different standards, and the AI doesn't distinguish between them.
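The gap between 'works' and 'is secure' is easy to see in password storage. The sketch below is an assumed example, not code from any assistant or from the investigation: the first function would pass a login demo while storing nothing safely, and the others use a salted, deliberately slow hash from Python's standard library. The names and the iteration count are illustrative choices.

```python
import hashlib
import hmac
import os

# Assumed work factor for this sketch; tune it for your own hardware and policy.
ITERATIONS = 600_000


def check_password_naive(stored_plaintext, attempt):
    # "Works": logins succeed. Not secure: the password is stored in the clear,
    # so anyone who reads the database reads every password.
    return stored_plaintext == attempt


def hash_password(password, salt=None):
    """Derive a salted PBKDF2-HMAC-SHA256 digest; returns (salt, digest)."""
    if salt is None:
        salt = os.urandom(16)  # fresh random salt per password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password, salt, expected_digest):
    """Recompute the digest and compare in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected_digest)
```

Both approaches let a user log in, which is why the naive one survives a quick manual test. Only the second keeps a leaked database from being a list of usable passwords.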

The exposure is happening in production systems at real companies. Healthcare portals leaking patient records. SaaS applications exposing customer databases. Internal business intelligence tools publicly searchable. These aren't hobby projects - they're revenue-generating applications built by developers who genuinely didn't know they were creating security disasters.

The AI coding boom has created an entire generation of developers who can build functional applications without understanding security fundamentals. They know how to prompt an AI. They don't know what SQL injection is, or why hard-coded credentials are bad, or how authentication actually works. The apps ship anyway.
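For readers who haven't met these two mistakes, here is a small sketch using Python's built-in `sqlite3` module. The table, function names, and the `DEMO_API_KEY` variable are hypothetical, chosen only for illustration.

```python
import os
import sqlite3


def make_demo_db():
    """Build a throwaway in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, username):
    # Vulnerable: user input is pasted into the SQL text, so input containing
    # quotes can change the meaning of the query (SQL injection).
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized: the driver passes the input as data, never as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


def load_api_key(env_var="DEMO_API_KEY"):
    # Read the credential from the environment instead of hard-coding it,
    # so it never lands in the repository.
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    return key
```

Passing the string `' OR '1'='1` as the username makes the unsafe version return every row in the table, while the safe version returns nothing because no user has that name. Both functions behave identically for ordinary input, which is exactly why the bug goes unnoticed.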

From a security researcher's perspective, this is a gold mine. Thousands of vulnerable applications appear daily, all with similar patterns of mistakes. Automated scanning tools can find exposed databases faster than developers can secure them. It's like Shodan, the search engine for internet-connected devices, for the AI era - except the exposure is accidental, not intentional.

The companies affected are scrambling to respond. Some are conducting emergency security audits of AI-generated code. Others are implementing mandatory security review processes for all AI-assisted development. A few are banning AI coding tools entirely, which seems like fighting the tide.