Anthropic quietly doubled its cost estimates for engineers using Claude Code, the company's AI-powered coding assistant—and the timing raises uncomfortable questions about AI business models and unit economics.

According to Business Insider, the company revised its token usage projections significantly upward after the product's initial launch. That's a red flag. When a company doubles cost estimates post-launch, it signals either poor planning or a fundamental problem with the underlying economics.

Neither option inspires confidence.

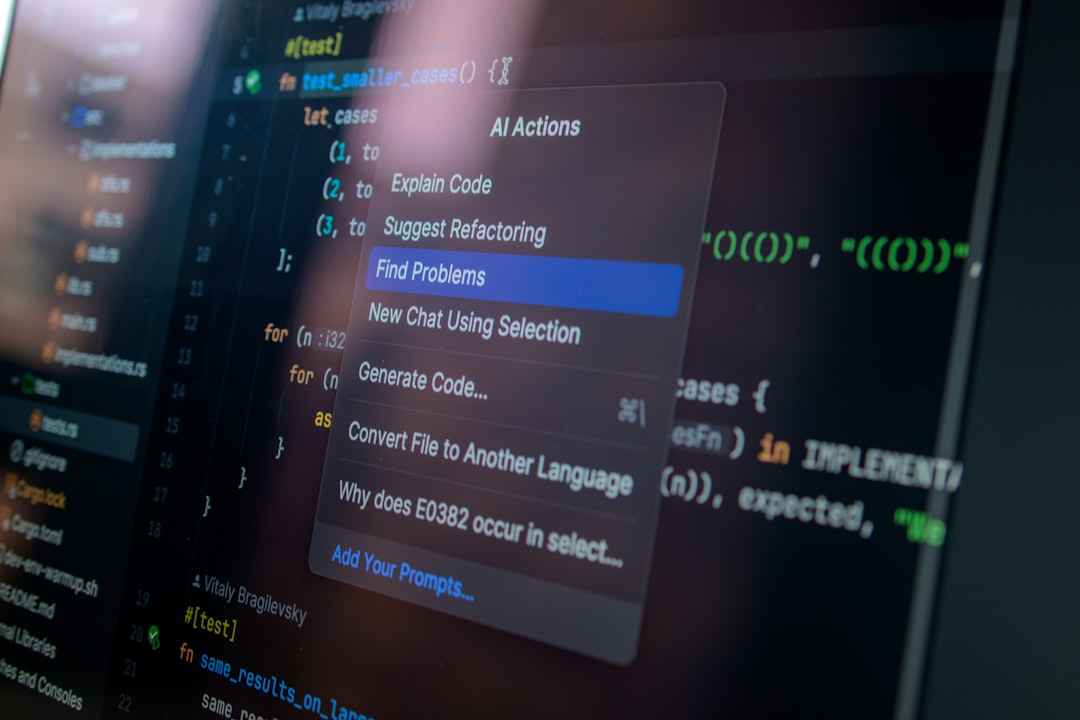

Claude Code competes directly with GitHub Copilot, Cursor, and other AI coding tools in an increasingly crowded market. These tools promise to accelerate development, reduce debugging time, and help engineers write better code faster. But they're expensive to run—processing code requires significant compute resources, and those costs add up quickly at scale.

Here's why doubling cost estimates matters: it suggests Anthropic underestimated how much developers would actually use the tool, or how expensive it would be to serve those requests, or both. For a company backed by billions in funding from Google, Amazon, and others, that's a concerning miss.

This isn't just an Anthropic problem—it's an AI industry problem. The entire sector is grappling with the gap between hype and profitability. OpenAI reportedly loses money on ChatGPT usage. Microsoft is subsidizing Copilot adoption to drive cloud consumption. The economics don't pencil out yet for most players.

From a customer perspective, a doubled cost estimate likely means either higher subscription fees down the road or reduced service levels. Enterprises evaluating AI coding tools need to account for pricing volatility as vendors figure out their actual unit economics.

The broader narrative about AI profitability is starting to crack. Investors have poured tens of billions into AI infrastructure and applications based on assumptions about usage patterns, compute efficiency, and pricing power. When leading players like Anthropic have to revise their cost models upward by 100%, it suggests those assumptions need serious scrutiny.

For developers and engineering teams, the lesson is clear: lock in pricing where possible, factor switching costs into your procurement strategy, and don't assume today's subsidized rates will last. These tools are incredibly useful, but the companies providing them are still figuring out how to make money.

Wall Street is watching. AI stocks have been on a tear, driven by expectations that these companies will eventually achieve profitable scale. Cost estimate revisions—especially quiet ones that double the original projections—undermine that thesis.

Anthropic hasn't provided a detailed public explanation for the cost revision, which is typical for private companies but leaves customers and observers guessing about root causes. Was it higher-than-expected usage? More expensive infrastructure? Model complexity that required more compute per request?

Whatever the reason, doubling your cost estimates isn't a sign of operational excellence. It's a sign that reality proved more expensive than your models predicted—and in venture-backed AI, where burn rates already rival small countries' GDP, that's a problem that compounds quickly.