The AI infrastructure boom is now consuming CPUs at unprecedented rates, pushing supply pressure beyond the well-documented memory shortage. Data centers are buying server-grade processors faster than manufacturers can produce them, straining the supply chain across the entire industry.

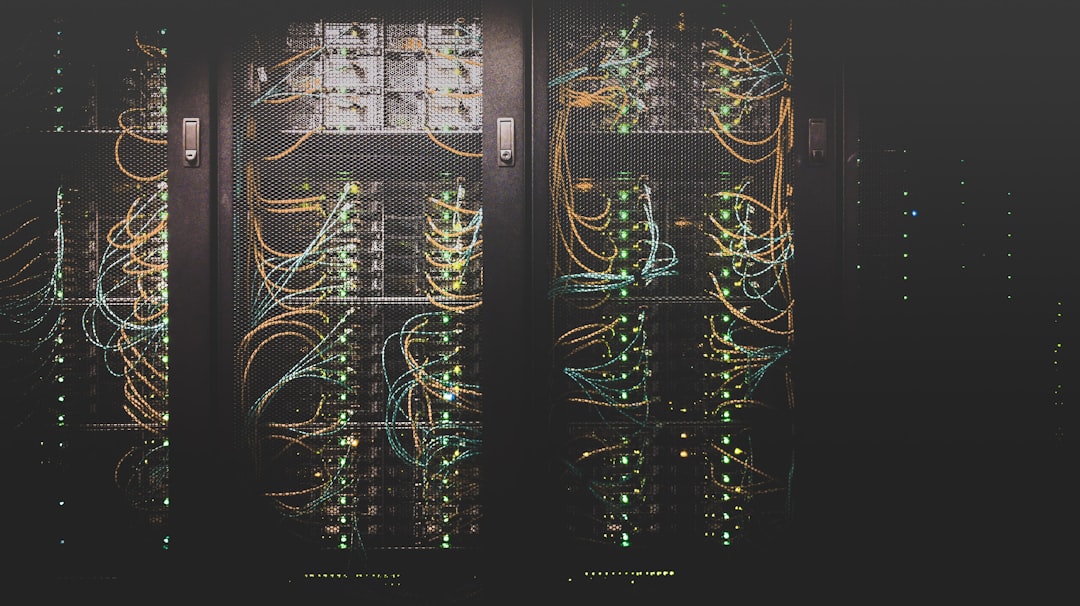

We've been focused on GPU scarcity for months. Everyone knows you can't get your hands on NVIDIA H100s without a long wait and deep pockets. But the entire compute stack is getting squeezed. AI workloads don't just need GPUs for training and inference; they also need massive CPU capacity for data preprocessing, serving, orchestration, and everything else that makes AI systems actually run in production.

The result is a supply crunch that affects everyone from cloud providers trying to expand capacity to enterprises building out their own infrastructure. Lead times for server-grade CPUs have stretched from weeks to months. Prices are climbing.

This matters because the competition isn't confined to AI companies. There is an opportunity cost for everyone else. When Silicon Valley giants are hoovering up every available processor for AI data centers, there's less supply for cloud providers serving traditional workloads, for companies building out their own infrastructure, and for anyone else who needs compute.

The chip shortage during COVID taught us that semiconductors are infrastructure, and infrastructure constraints ripple through the entire economy. We're seeing that again, except this time the cause isn't pandemic-related supply chain disruption. It's AI-driven demand outpacing production capacity.

The technology is impressive. The question is whether we're building data center capacity sustainably, or setting up the next constraint that collides with reality when the music stops.

As one industry observer put it: "We solved the GPU shortage by throwing money at it. Now we're discovering that won't work for the entire stack."