An AI reckoning is coming, according to a new analysis from enterprise consultants who have worked inside the hype machine. The core problem isn't that the technology doesn't work. It's that nobody actually knows how to use it effectively, and everyone has been pretending they do.

Dorian Smiley from Codestrap puts it bluntly: "No one knows right now what the right reference architectures or use cases are," and "A lot of people are pretending that they know." That's consultant-speak for "we've sold a lot of AI solutions that aren't actually solving problems."

The specific failures are telling. An AI-generated Rust replacement for SQLite produced 3.7x more code while performing 2,000 times worse. It passed tests. It looked legitimate. It was fundamentally broken. That's not a one-off - it's representative of a broader pattern where AI output appears plausible but collapses under real-world use.
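To make the "it passed tests" point concrete, here is a minimal Python sketch of how two implementations can satisfy the same correctness check while differing enormously in cost. It is an illustration only, not a reconstruction of the SQLite rewrite: the function names (`lookup_indexed`, `lookup_scan`, `compare`) and the data sizes are hypothetical, chosen for this example.

```python
import timeit
from typing import Optional


def lookup_indexed(index: dict, key: int) -> Optional[str]:
    """Hash-based lookup: roughly constant time per query."""
    return index.get(key)


def lookup_scan(rows: list, key: int) -> Optional[str]:
    """Linear scan: returns the same answer, but cost grows with table size."""
    for k, value in rows:
        if k == key:
            return value
    return None


def build_data(n: int):
    """Build the same (hypothetical) table in two shapes: a list and a dict."""
    rows = [(i, f"row-{i}") for i in range(n)]
    return rows, dict(rows)


def compare(n: int = 50_000, repeats: int = 20):
    """Run one correctness check, then time both lookups on the worst-case key."""
    rows, index = build_data(n)
    key = n - 1  # last row: the worst case for the linear scan

    # The "test suite": both implementations return the same, correct value.
    assert lookup_indexed(index, key) == lookup_scan(rows, key) == f"row-{key}"

    fast = timeit.timeit(lambda: lookup_indexed(index, key), number=repeats)
    slow = timeit.timeit(lambda: lookup_scan(rows, key), number=repeats)
    return fast, slow


if __name__ == "__main__":
    fast, slow = compare()
    print(f"indexed: {fast:.6f}s, scan: {slow:.6f}s, ratio: {slow / fast:.0f}x")
```

Both functions pass the assertion, so a suite that checks only return values would report green for either one. The gap shows up only when something measures cost as well as correctness.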

Or take Deloitte, which had to refund the Australian government after errors turned up in an AI-generated report. These aren't startups fumbling with new technology. These are established consultancies deploying AI for clients and discovering it doesn't work as advertised.

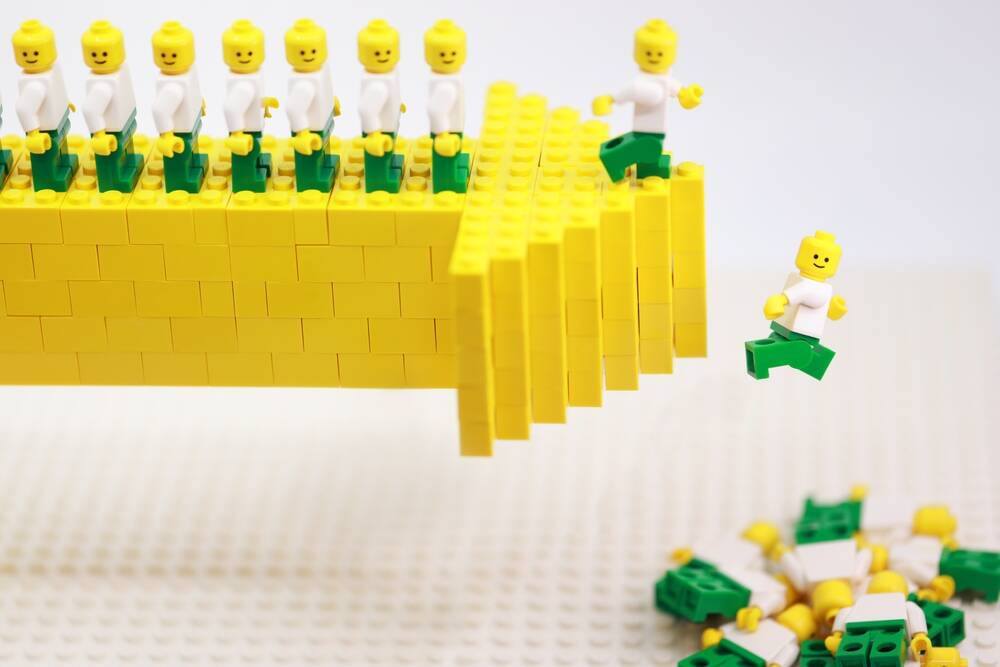

The measurement problem runs deeper than individual failures. Companies are tracking the wrong metrics entirely: lines of code, number of pull requests, development velocity. As Smiley notes, "Lines of code, number of pull requests, these are liabilities." AI can generate large volumes of code very quickly. That doesn't mean the code is good, maintainable, or solves the right problems.

The technology is impressive. The question is whether anyone actually needs most of what it produces. When your AI coding assistant generates hundreds of lines that look plausible but introduce subtle bugs, are you saving time or creating future problems?

The predicted reckoning looks like this: eight to nine months of mounting quality crises for organizations that went heavy on AI tools. Legal liability from bad AI-generated business advice. Clients demanding discounts when they learn that the services they paid for were delivered with AI. And, most tellingly, insurance companies removing AI coverage from liability policies.